Is testing bounded?

Testing seems like an activity whose outcome is definitive, i.e., PASS or FAIL. Seems right? Hmmm, if expectations, specifications and intentions were clear, then a definitive outcome (met/not-met) would seem fair. But what if that is not true, i.e., the specifications/expectations are vague or fuzzy, not stated, incomplete, or discovered and fine-tuned on the fly?

Systems are complex, and therefore so is testing

A software system is not just a collection of discrete parts/features but an interesting bundle of interactions: with itself, with other systems and the environment, and with users in different situations/contexts. Is it possible to clearly list out intentions/expectations for these myriad interactions? Also, a system is continuously evolving, with new features added, existing ones enhanced, issues fixed and code refactored for betterment. Hence system entropy increases continuously (2nd Law of Thermodynamics), and SW engineers attempt to manage this complexity. This is what smart test folks focus on: lowering the risk of failure and, in the process, finding binary answers (met/not-met). Testing is no longer a simple problem of judging (aka checking); it is a complex problem-solving activity of analysing, understanding, questioning, hypothesising, proving and also evaluating/judging.

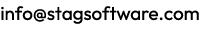

Problem solving approaches

What does it take to solve a complex problem, especially in the case of testing, which is really unbounded? How does one do contextual analysis, review, design, evaluation and automation, and assess systems that are built rapidly and are constantly evolving? Is it by using techniques, following a process, or applying technology?

Well, it takes a multi-faceted approach to solve complex problems:

- Technique/formula to solve precisely

- Principle(s) to make good choices

- Guidelines to steer in right direction

- Frameworks/Models to abstract well

- Process to do activities consistently

- Using prior experience or heuristics

- Explore, learn, course correct constantly

- Creativity – thinking out-of-box

- Luck/chance via random/ad hoc doing

Detailing these approaches

#1 TECHNIQUE/FORMULA

A technique is a particular method of doing an activity, usually a method that involves practical skills [Collins dictionary], whilst a formula is generally a fixed pattern that is used to achieve consistent results, a recipe. [Vocabulary.com]

Bet you can easily relate this to estimating effort/time, designing scenarios/test cases, measuring code complexity and so on. Techniques and formulas are great because they help you solve a problem precisely and without ambiguity; all one has to do is choose the right technique/formula.
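To make the idea of a technique concrete, here is a minimal sketch of boundary value analysis, one well-known technique for designing test cases. The function name `boundary_values` and the example range are assumptions made for illustration; they are not taken from any particular tool.

```python
def boundary_values(low: int, high: int) -> list[int]:
    """Return boundary-value test inputs for the inclusive range [low, high].

    Hypothetical helper for illustration: it picks the values just outside,
    on, and just inside each edge of the valid range.
    """
    if low > high:
        raise ValueError("low must not exceed high")
    candidates = [low - 1, low, low + 1, high - 1, high, high + 1]
    # Small ranges produce overlapping candidates, so drop duplicates
    # and return the values in ascending order.
    return sorted(set(candidates))


# Example: a field that accepts ages from 18 to 65 inclusive.
print(boundary_values(18, 65))
```

Once the technique is chosen, the inputs follow mechanically: there is no judgment call about which values to pick, which is what makes a technique precise.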

#2 PRINCIPLE

A principle is a proposition or value that is a guide for behavior or evaluation. [Wikipedia]

Here are some that we use:

- It is not testing later, but getting involved early

- Quality is everyone’s job

- Decompose to understand well

- Focus on the critical few (Pareto principle) and so on.

Principles are not as precise as a technique/formula, but they help you make choices.
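As a rough illustration of how "focus on the critical few" can guide a choice, the sketch below ranks areas of a system by defect count and keeps the smallest set that accounts for a chosen share of all defects. The function name `critical_few`, the 80% threshold and the sample counts are all hypothetical, chosen only to show the idea.

```python
def critical_few(defects_by_area: dict[str, int], threshold: float = 0.8) -> list[str]:
    """Return the highest-defect areas that together reach `threshold` of all defects.

    Hypothetical helper: the 0.8 default mirrors the usual 80/20 reading of
    the Pareto principle and is an assumption, not a rule.
    """
    total = sum(defects_by_area.values())
    if total == 0:
        return []
    selected: list[str] = []
    running = 0
    # Walk the areas from most to fewest defects, accumulating their share.
    for area, count in sorted(defects_by_area.items(), key=lambda kv: kv[1], reverse=True):
        selected.append(area)
        running += count
        if running / total >= threshold:
            break
    return selected


# Made-up defect counts, for illustration only.
sample = {"checkout": 60, "search": 25, "profile": 10, "help": 5}
print(critical_few(sample))
```

The output is a suggestion about where to look first, not a verdict; the principle narrows the choice and the tester still decides.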

#3 GUIDELINE(S)

A guideline is a general rule, principle, or piece of advice; it gives you a sense of direction. [Oxford Dictionary] Some guidelines: test with real customer data before release, validate interesting corner cases, use coverage to assess complex code, and look for code smells.

#4 EXPERIENCE/HEURISTICS

Experience is knowledge or skill gained by doing a job or activity for a long time [Collins English Dictionary], whilst heuristics are strategies derived from previous experiences with similar problems. [Wikipedia] These are deep personal knowledge gleaned from experience and codified as patterns and anti-patterns.

#5 EXPLORATION

Exploring is trying to discover, to learn about. [Cambridge dictionary] This is not cookie-cutter stuff; it is about walking around and exploring the software system in terms of how it works, how it is constructed, how data flows around, how users interact, how it interacts with the environment, how I can use/abuse it and so on. It is staying curious: to question, to experiment, to understand, to know what you still do not know. This is very useful in all phases of the test lifecycle, from understanding to evaluation.

#6 CREATIVITY

This is approaching a problem or challenge from a new perspective, alternative angle, or with an atypical mindset. [sessionlab.com] This approach, as the word itself suggests, is unbounded and is not done by following a strict method/process. We have all done “out-of-box” thinking, which is really creative thinking: not doing anything strictly logical or from first principles, but seemingly being inspired by solutions from other domains.

#7 RANDOM/AD-HOC

Random is choosing or happening without a method or pattern [Macmillan Dictionary], whilst ad hoc is not planned in advance, done only because a particular situation has made it necessary. [Collins English Dictionary] This approach is the other extreme: relying on lady luck, or chance, to solve problems. Chaos or randomness is a powerful tool, especially in evaluating software, as we tend to be too organised in how we do this, what data sets we use and so on. Stimulating variant usage also means involving more people in evaluating the software, which we know is very useful in alpha/beta testing.
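One way to bring randomness into evaluation deliberately is to generate inputs at random and check that a property holds for each of them. The sketch below is a minimal, assumed setup: `random_check`, `gen_int_list` and `sort_is_ordered` are hypothetical names, and Python's built-in `sorted` merely stands in for the code under test.

```python
import random


def random_check(prop, gen, trials=200, seed=0):
    """Run `prop` on `trials` randomly generated inputs and return the failing ones.

    A fixed seed keeps the run repeatable, so a failure can be reproduced.
    """
    rng = random.Random(seed)
    failures = []
    for _ in range(trials):
        value = gen(rng)
        if not prop(value):
            failures.append(value)
    return failures


def gen_int_list(rng):
    """Generate a list of 0 to 8 integers in the range -50..50."""
    size = rng.randint(0, 8)
    return [rng.randint(-50, 50) for _ in range(size)]


def sort_is_ordered(values):
    """Property: every element of the sorted output is <= the one after it."""
    result = sorted(values)
    return all(a <= b for a, b in zip(result, result[1:]))


failures = random_check(sort_is_ordered, gen_int_list)
print(len(failures))
```

Randomly generated data tends to include combinations nobody would have written down by hand (empty lists, duplicates, negatives), which is exactly the kind of variance that organised test data often lacks.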

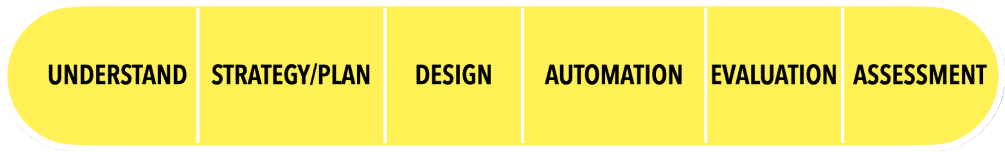

In which test lifecycle activities are they applied?

The test lifecycle consists of activities commencing from understanding intents/expectations to finally assessing the system.

| Activity | What it involves |
| --- | --- |
| Understand | Understand needs & expectations – who, what (features, requirements, flows, components), what-for (criteria), how much, linkages, environment, stage (new, fix, enhancement), where |
| Strategy/plan | Approach – Test what (entities) & what-for (test types), how (test techniques & tools), how well (metrics for progress, quality) |
| Design | Scenarios, cases and data sets |
| Automation | Scripts |
| Evaluation | Evaluating, enhancing scenarios/cases, learning, exploring |
| Assessment | Judging progress, quality, fitness |

So how do we perform each of these activities in the lifecycle? We use a judicious mix of techniques, principles, guidelines, models and heuristics, woven together by a process that also encompasses exploration, creativity and random/ad hoc doing in each of these test lifecycle activities.

In conclusion…

It takes Smart QA to tackle the unbounded, lower the entropy and contain the risk. One of the facets of Smart QA is about understanding various problem-solving approaches and judiciously using a meaningful mix for the context.

In closing, here are two questions for you:

Q1 – How do YOU test complex systems rapidly?

Q2 – What problem-solving approaches do YOU USE in your testing life cycle?

Mull these over and relate them to the seven approaches to problem solving outlined above.